git-bigfiles - Git for big files

git-bigfiles is our fork of Git. It has two goals:

- make life bearable for people using Git on projects hosting very large files (hundreds of megabytes)

- merge back as many changes as possible into upstream Git once they’re of acceptable quality

Features

git-bigfiles already features the following fixes:

- git-config: add a core.bigFileThreshold option to treat files larger than this value as big files

- git-p4: fix poor string building performance when importing big files (git-p4 is now only marginally faster on Linux but 4 to 10 times faster on Win32)

- git-p4: do not perform keyword replacements on big files

- git-fast-import: do not perform delta search on big files and deflate them on-the-fly (fast-import is now three times as fast and uses 5 times less memory with big files)

- git-pack-objects: do not perform delta search on big files (pack-objects is now 10% faster, but there is no memory gain yet)
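As a rough illustration of the core.bigFileThreshold option listed above, the following sketch sets it in a scratch repository and reads it back. The 50m value is an arbitrary example, not a recommendation. The size suffix follows what upstream Git accepts for this option; that git-bigfiles parses it the same way is an assumption.

```shell
# Sketch only: the threshold value is illustrative, not a tuned setting.
repo=$(mktemp -d)
git init -q "$repo"

# Store the threshold in the repository's local configuration.
# Assumption: git-bigfiles accepts the same k/m/g suffixes as upstream Git.
git -C "$repo" config core.bigFileThreshold 50m

# Read the raw value back to confirm it was stored.
threshold=$(git -C "$repo" config core.bigFileThreshold)
echo "core.bigFileThreshold = $threshold"
```

Setting the option per repository, rather than with --global, keeps the change limited to projects that actually host big files.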

Results

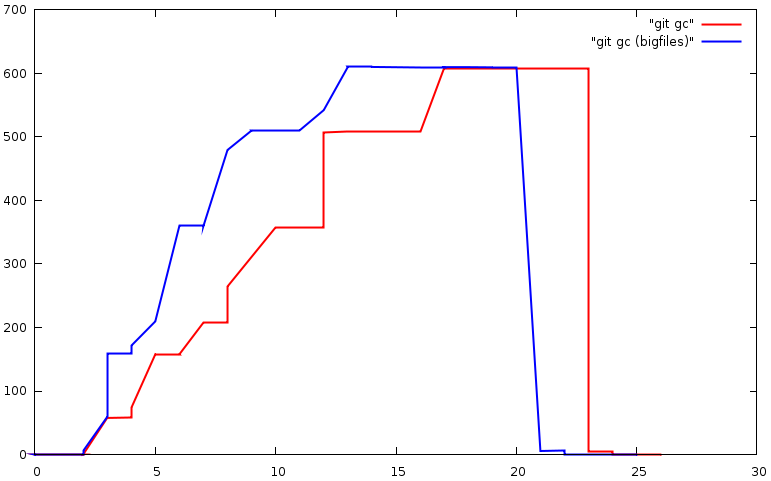

Git fast-import memory usage and running time when importing a repository with binary files up to 150 MiB, followed by git-gc on the same repository.

Development

git-bigfiles development is centralised in a repo.or.cz Git repository.

Clone the repository:

git clone git://repo.or.cz/git/git-bigfiles.git

If you already have a working copy of upstream Git, you can save a lot of bandwidth by passing it as a reference repository:

git clone git://repo.or.cz/git/git-bigfiles.git --reference /path/to/git/
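The bandwidth saving comes from the clone borrowing objects from the reference repository instead of downloading them. The sketch below shows the mechanism with two throwaway local repositories, so no network access is needed; the paths and the demo identity are hypothetical and stand in for a real upstream checkout.

```shell
# Sketch only: local stand-ins for the upstream checkout and the new clone.
work=$(mktemp -d)
git init -q "$work/upstream"
git -C "$work/upstream" -c user.name=demo -c user.email=demo@example.com \
    commit -q --allow-empty -m "initial"

# Clone while borrowing objects from the existing repository.
git clone -q --reference "$work/upstream" "$work/upstream" "$work/clone"

# The clone records the borrowed object store in its alternates file.
cat "$work/clone/.git/objects/info/alternates"
```

Because the clone depends on the reference repository's object store, the reference should not be deleted or aggressively pruned while the clone is still in use.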

The main Git repository is constantly merged into git-bigfiles. See the git-bigfiles repository on repo.or.cz.

To do

The following commands are real memory hogs with big files:

- git diff-tree --stat --summary -M <sha1> HEAD

- git gc (as called by git merge) fails with warning: suboptimal pack - out of memory

Readings

- 2006/02/08: Linus on Handling large files with GIT

- 2006/07/10: Revisiting large binary files issue

- 2008/09/09: malloc fails when dealing with huge files
